Articles tagged with #thought leadership

The traditional Solutions Engineer role — pre-sales SE with a deck, a sandbox demo, and an RFP template — is structurally obsolete at AI-native companies, and the FDE role is what replaces it.

Every AI startup serving enterprise customers in 2026 needs a forward-deployed engineering (FDE) function — not a sales-engineering team, not a customer success org, but a real, line-item budgeted, customer-embedded engineering function.

Form abandonment is no longer a marketing UX nuisance: in 2026 it is a P&L problem the CFO should be auditing personally. The average B2B SaaS demo-request form loses 60% of started sessions to abandonment, which means the company is paying full CAC to acquire a click and keeping less than half of the resulting intent.

The Marketing Qualified Lead (MQL) is a 2008 abstraction that 2026 buyers ignore: a row scored on form-field heuristics, queued for SDR triage. Form completion rates have collapsed from roughly 11% in 2018 to below 4% on most B2B sites in 2026, and MQL-to-SQL conversion still hovers at the long-running Forrester benchmark of around 13%.

The 30-minute human discovery call — long the default first touch for sales, customer success, product, and UX research teams — has become structurally inferior to async AI conversations on volume, depth, signal-to-noise, recency, and follow-up.

The conversion-rate-optimization (CRO) industry has spent fifteen years selling the same myth: that you can optimize a lead form into a healthy funnel by removing fields, polishing labels, and split-testing button colors. The math is now in, and the ceiling is structural, not field-level.

Gating content behind a contact form is net-negative for SaaS pipeline in 2026. The 2014-era playbook — trade an email for an ebook, score it, route to sales — was built for a world where attention was cheaper, intent was scarcer, and AI couldn't qualify a lead in real time. None of that is true anymore.

Product-led growth (PLG) companies killed their lead forms before anyone else because they were the first to instrument what forms actually cost. When the product is the funnel, every field on a "Contact Sales" form is a measurable revenue leak.

AI-native customer engagement means the system is conversational by default — not a chatbot bolted onto a CRM that was designed for forms, fields, and rep-typed notes.

"Human-like" is the wrong North Star for AI customer interviews. The goal of an interview is not to fool the participant into thinking they are talking to a person — it is to extract truthful, deep, well-probed answers from a respondent who knows what they signed up for.

The 8-person focus group should not be improved with AI; it should be replaced. Developed by sociologist Robert K. Merton in the 1940s to study reactions to wartime propaganda films, and codified in his 1956 book The Focused Interview, the format has not been meaningfully redesigned since the Eisenhower administration.

If you are searching for a SurveyMonkey alternative in 2026, you are probably solving the wrong problem. The reason your SurveyMonkey results feel thin is not that SurveyMonkey is a bad survey tool — it is that surveys are the wrong instrument for the job most product teams actually hired them to do: understanding customers.

Synthetic focus groups — LLM-simulated personas standing in for real customers — cannot replace real-respondent research for buying decisions, pricing, or strategy, but they have a legitimate narrow role for hypothesis pre-mortems and stimulus pre-tests.

The "AI real estate agent" framing — software that replaces the human agent — is the wrong vision, and the data already shows it. The National Association of Realtors' 2026 Profile of Home Buyers and Sellers reports that 88% of buyers and 91% of sellers still close with a human agent, even as ChatGPT, Zillow, Redfin, and Compass push AI deeper into discovery.

"AI survey" is a contradiction in terms. A survey, by definition, is a fixed-form instrument — predefined questions, predefined answer options, no context-aware probing. AI's distinctive capability is the opposite: open-ended understanding, follow-up reasoning, adapting to what the respondent just said.

"Human-like" is the wrong design target for AI customer interviews. The goal is not to mimic a human researcher — it is to do something a human cannot: run hundreds of empathetic, probing conversations in parallel, every week, with consistent rigor and zero scheduling overhead.

Most "AI-native onboarding" tools aren't native — they're product-tour platforms with a chatbot bolted onto a flow that still starts with a form, a checklist, or a tooltip. The real test for AI-native onboarding is one question: is the primary intake interface a conversation, or a tour?

The thesis: replace surveys with AI — don't augment them. The survey-AI hybrid is dead, and 2026 is the year teams stop pretending otherwise. Three reasons: (1) bolting AI summarization onto SurveyMonkey, Typeform, or Qualtrics still front-loads the schema problem — you only get answers to the questions you thought to…

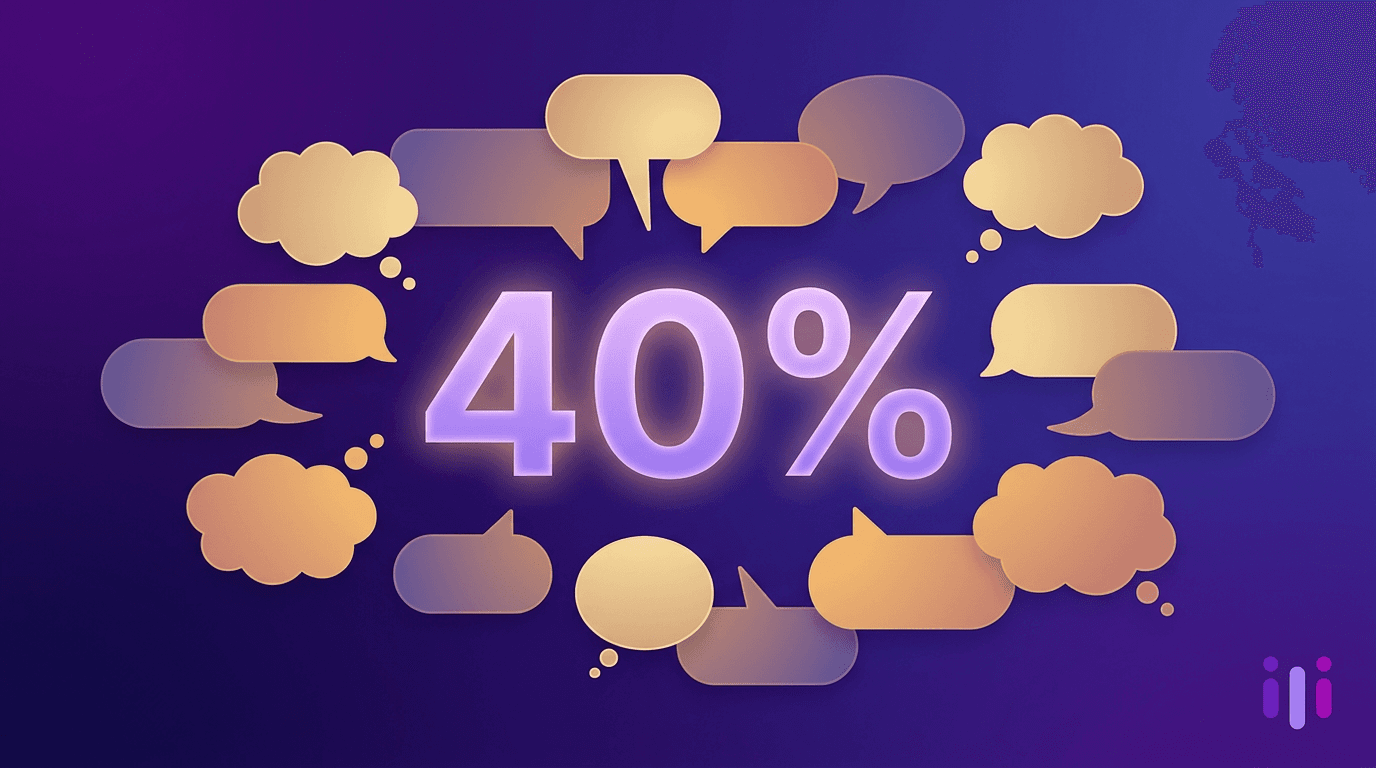

The product-market fit survey — specifically Sean Ellis's "How would you feel if you could no longer use this product?" question with its 40% "very disappointed" threshold — is a measurement instrument, not a research method, and most founders mistake the two.

Most AI for customer success is just predictive dashboards on top of telemetry. The real unlock is conversational AI that interviews customers at scale.

The survey is a legacy data structure from 1932. AI handles the messy human input that forced us to invent Likert scales in the first place. Here's why conversations win.

Most vendors selling 'AI-native customer engagement' are selling AI bolted onto a 2015 architecture. Four tests separate AI-native from AI-bolted-on.

Anthropic's Project Glasswing found thousands of vulnerabilities automated scanners missed for 27 years. Your customer feedback tools have the same blind spot.

Why annual employee surveys miss what matters and how AI-powered conversations capture the context, nuance, and honesty that drive real engagement improvements.

Why traditional student feedback surveys fail to capture what students actually think, and how AI-powered conversations are replacing them with better data and higher engagement.

Why event registration forms drive abandonment and miss attendee intent, and how conversational AI registration captures better data with higher completion rates.

Static intake forms lose 75-81% of prospects before submission. Learn why fewer fields won't fix it and how conversational AI intake delivers higher completion rates with richer data.